Replicate

The serverless API for running and fine-tuning open-source AI models.

Pricing

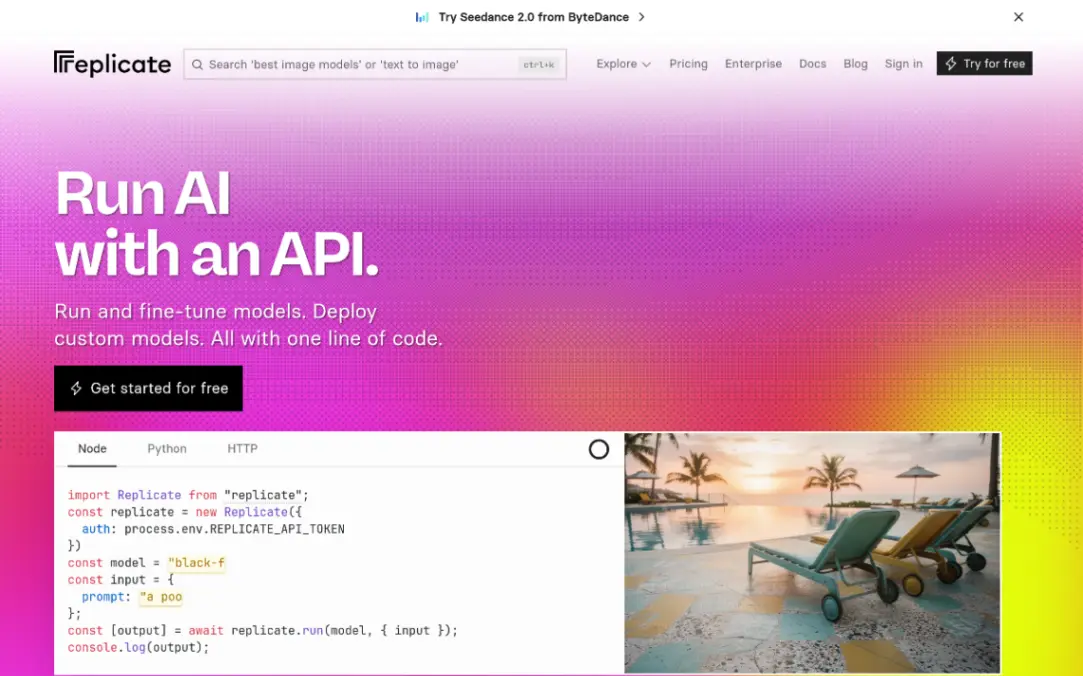

PaidWhat is Replicate?

Is This Tool Right For You?

✓ You are a developer who needs to integrate AI models (image, video, text) into an app without managing GPU infrastructure.

✓ You want access to the latest open-source models (like FLUX, DeepSeek, or Llama) through a unified API.

✓ You prefer a pay-as-you-go billing model over expensive monthly subscriptions.

✓ You need to fine-tune existing models on your own data with minimal configuration.

✗ You are a non-technical user looking for a chat interface (Replicate is primarily for developers).

✗ You have extremely high-volume traffic where dedicated on-premises hardware would be significantly cheaper than serverless inference.

Quick Verdict

In 2026, Replicate remains the gold standard for developers who want to move fast. It bridges the gap between complex research models found on GitHub and the production environments of modern SaaS applications. By abstracting away the nightmare of CUDA drivers, Docker containers, and GPU provisioning, Replicate lets you run even the most demanding models, such as FLUX 1.1 Pro or Claude 3.7 Sonnet, with a few lines of code. While the serverless model can lead to occasional cold starts, the breadth of its model library and the fairness of its usage-based pricing make it an essential tool for any AI-driven product team.

What Replicate Does

Replicate is a cloud-based platform that hosts thousands of machine learning models and makes them accessible via a standardized API. Instead of renting a virtual machine, installing Python dependencies, and managing a Flask or FastAPI wrapper, you simply send an HTTP request or use their SDKs (Python and Node.js) to run a model.
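To show what "send an HTTP request" looks like in practice, here is a minimal sketch that uses only Python's standard library. It builds, but does not send, a POST to what we understand to be Replicate's `models/{owner}/{name}/predictions` endpoint. The token goes in a Bearer header and the model parameters are nested under `input`. The model slug and prompt are examples, and you should check the endpoint shape against the current HTTP API reference.

```python
import json
import os
import urllib.request

API_BASE = "https://api.replicate.com/v1"


def build_prediction_request(model: str, model_input: dict, token: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that asks Replicate to run `model`.

    `model` is an "owner/name" slug such as "black-forest-labs/flux-schnell".
    Assumes the models/{owner}/{name}/predictions endpoint, which targets the
    model's latest version; other models may need a pinned version instead.
    """
    owner, name = model.split("/", 1)
    body = json.dumps({"input": model_input}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/models/{owner}/{name}/predictions",
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


if __name__ == "__main__":
    req = build_prediction_request(
        "black-forest-labs/flux-schnell",
        {"prompt": "a watercolor fox in a misty forest"},
        os.environ.get("REPLICATE_API_TOKEN", "r8_placeholder"),
    )
    print(req.get_method(), req.full_url)
    # Sending it would be urllib.request.urlopen(req), which needs a real token.
```

The official Python client handles this plumbing for you. The equivalent call is roughly `replicate.run("black-forest-labs/flux-schnell", input={"prompt": "..."})`, with the token read from the `REPLICATE_API_TOKEN` environment variable.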

The platform handles the heavy lifting of scaling. If you send one request, it spins up a container; if you send a thousand, it scales horizontally to meet demand. Replicate supports a wide variety of modalities, including text-to-image (FLUX, Stable Diffusion), large language models (Claude, DeepSeek), video generation (Wan 2.1), and specialized tasks like image restoration, speech-to-text, and music generation.

Beyond running public models, Replicate provides the tools to fine-tune models on your own datasets, so you can create custom versions of popular architectures like FLUX or Llama that understand your specific brand or data requirements.
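Replicate handles horizontal scaling on its side, but the client still decides how many requests to keep in flight at once. The following minimal sketch fans out a batch with a bounded thread pool. `run_one` is a hypothetical stand-in for whatever function sends a single prediction and returns its output. The `max_workers=8` default is an arbitrary example, not a documented Replicate quota.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable


def fan_out(prompts: Iterable[str], run_one: Callable[[str], str], max_workers: int = 8) -> list[str]:
    """Run `run_one` on every prompt concurrently and return results in input order.

    `run_one` is hypothetical: in a real app it would create one prediction and
    wait for its output. `max_workers` caps concurrent client-side requests.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as the inputs.
        return list(pool.map(run_one, prompts))


if __name__ == "__main__":
    # Offline stand-in for a real API call so the sketch runs anywhere.
    def fake_run(prompt: str) -> str:
        return f"image-for:{prompt}"

    print(fan_out(["cat", "dog", "owl"], fake_run, max_workers=2))
```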

Key Strengths

Unified Developer Experience: Whether you are running a 70B parameter LLM or a small image-to-text model, the API structure remains consistent. This drastically reduces the learning curve when switching between different AI capabilities within your application. The provided Node.js and Python libraries are idiomatic and well-documented, making integration a matter of minutes rather than days.

Access to Cutting-Edge Open Source: Replicate is often the first place where new, state-of-the-art open-source models are deployed with a production-ready API. In 2026, their library includes heavy hitters like the FLUX series from Black Forest Labs, ByteDance's Seedance 2.0, and Google's Nano-Banana-Pro. This gives indie developers the same power as large tech companies without the R&D overhead.

Zero-Infrastructure Fine-Tuning: One of Replicate's most powerful features is the ability to train models. You can upload a zip file of images or a JSONL file of text, and Replicate will handle the training process on their high-end GPUs. Once finished, your fine-tuned model is immediately available via the same API as the public models.
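Preparing the upload is ordinary file handling. The sketch below bundles images into a flat zip archive and writes text examples as JSONL, with one JSON object per line. The `prompt`/`completion` field names are hypothetical. Each training model on Replicate defines its own expected inputs, so check the trainer's input schema before uploading.

```python
import json
import tempfile
import zipfile
from pathlib import Path


def zip_images(image_paths: list[Path], zip_path: Path) -> Path:
    """Bundle training images into a flat zip archive (file names only, no folders)."""
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for path in image_paths:
            zf.write(path, arcname=path.name)
    return zip_path


def write_jsonl(examples: list[dict], jsonl_path: Path) -> Path:
    """Write one JSON object per line (JSONL). Field names are trainer-specific."""
    with jsonl_path.open("w", encoding="utf-8") as f:
        for example in examples:
            f.write(json.dumps(example, ensure_ascii=False) + "\n")
    return jsonl_path


if __name__ == "__main__":
    workdir = Path(tempfile.mkdtemp())
    # Placeholder bytes stand in for real photos so the sketch runs anywhere.
    photos = []
    for i in range(3):
        photo = workdir / f"photo_{i}.jpg"
        photo.write_bytes(b"not a real jpeg")
        photos.append(photo)
    archive = zip_images(photos, workdir / "training_images.zip")
    # Hypothetical field names; match them to the trainer you actually use.
    data = write_jsonl(
        [{"prompt": "Summarize our refund policy.", "completion": "Refunds within 30 days."}],
        workdir / "train.jsonl",
    )
    print(archive, data)
```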

Transparent Usage-Based Billing: There are no 'Pro' tiers or 'Enterprise' gatekeeping for basic access. You pay for the compute you use. Depending on the model, you are billed either per second of execution time or per individual output (such as per image generated or per token), which is ideal for startups that need to keep margins tight during the early stages of growth.

Real Use Cases

SaaS Founder building an AI headshot generator: Using Replicate's fine-tuning capabilities, a founder can allow users to upload 10-20 photos, train a custom FLUX model in the background, and then generate professional headshots—all triggered via API calls from their web app.
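Training and generation can take minutes, so a web app would usually not block while they run. When creating a prediction, you can pass a `webhook` URL (optionally with `webhook_events_filter`, for example `["completed"]`), and Replicate posts the prediction JSON to that URL as it progresses. Below is a minimal handler sketch. It assumes the payload carries `id`, `status`, `output`, and `error`. The returned dict shape is hypothetical, and webhook signature verification is deliberately left out.

```python
import json


def handle_replicate_webhook(raw_body: bytes) -> dict:
    """Map a prediction webhook payload to a hypothetical app-level update.

    Assumes the body is prediction JSON with "id", "status", "output" and
    "error" fields. Signature verification is intentionally omitted.
    """
    prediction = json.loads(raw_body)
    pid = prediction["id"]
    status = prediction.get("status")
    if status == "succeeded":
        output = prediction.get("output") or []
        # Image models may return one URL or a list of URLs; normalize to a list.
        images = [output] if isinstance(output, str) else list(output)
        return {"id": pid, "state": "ready", "images": images}
    if status in ("failed", "canceled"):
        return {"id": pid, "state": "error", "reason": prediction.get("error")}
    # "starting" or "processing": nothing to store yet.
    return {"id": pid, "state": "pending"}


if __name__ == "__main__":
    sample = b'{"id": "abc123", "status": "succeeded", "output": ["https://example.com/headshot.png"]}'
    print(handle_replicate_webhook(sample))
```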

Marketing Team automating social media content: A marketing engineer can use the Recraft V3 or Ideogram v3 models on Replicate to automatically generate on-brand social media graphics whenever a new blog post is published, ensuring consistent visual style at scale.

Mobile App Developer adding video features: By integrating the Wan 2.1 i2v (image-to-video) models, a developer can create an app that transforms static user photos into 480p or 720p cinematic videos, paying $0.09 per second of generated video at 480p.

Enterprise Data Analyst summarizing vast archives: Using Claude 3.7 Sonnet or DeepSeek R1 via Replicate, an analyst can process millions of tokens of internal documentation to extract insights, benefiting from the large context windows and reasoning capabilities of these top-tier models.

Creative Agency restoring vintage photography: An agency can build an internal tool using Replicate's image restoration models to automatically upscale and de-noise historical archives for clients, charging a premium for a service that costs them cents to run.

Best For

- Early-stage startups that need to prototype and ship AI features without hiring a dedicated Machine Learning Engineer.

- Indie hackers who want to build 'wrappers' or specialized tools around open-source models with minimal upfront cost.

- Product engineers who prefer high-level abstractions and SDKs over managing raw GPU clusters and Kubernetes.

- Creative technologists experimenting with the latest generative AI models across multiple modalities (image, video, audio).

- Researchers who want a simple way to share their models with the world by hosting them on a platform that provides an instant API.

Who Should Look Elsewhere

If you are an enterprise with massive, predictable workloads that run 24/7, you might find that the convenience premium of Replicate starts to add up. In such cases, managing your own reserved instances on AWS or GCP might be more cost-effective. Additionally, if you need ultra-low latency (sub-100ms) for real-time applications, the serverless cold starts on Replicate can occasionally be a dealbreaker. For those users, a dedicated inference provider like Together AI or Groq might be a better fit because they specialize in high-speed, dedicated hardware for specific model architectures. Finally, if you are a non-technical creative who just wants to play with AI without writing code, platforms like Midjourney or ChatGPT offer a more polished user interface than Replicate's developer-centric playground.

Limitations

Cold Start Latency: Because Replicate is serverless, models that haven't been used recently may take several seconds to 'wake up' as the hardware is provisioned. This can result in a sluggish experience for the first user of a feature.
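If you poll instead of using webhooks, a cold start shows up as a prediction that stays in the `starting` status before moving to `processing`. The following minimal polling sketch uses capped exponential backoff. `get_prediction` is a hypothetical callable that fetches the current prediction JSON (for example via `GET /v1/predictions/{id}`). The timeout and delay defaults are arbitrary.

```python
import time
from typing import Callable

TERMINAL_STATUSES = {"succeeded", "failed", "canceled"}


def wait_for_prediction(
    get_prediction: Callable[[], dict],
    timeout_s: float = 300.0,
    first_delay_s: float = 0.5,
    max_delay_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Poll until the prediction reaches a terminal status, or raise TimeoutError.

    While a cold model boots the status stays "starting", so the delay doubles
    (up to max_delay_s) instead of hammering the API at a fixed rate.
    """
    delay = first_delay_s
    waited = 0.0
    while True:
        prediction = get_prediction()
        if prediction.get("status") in TERMINAL_STATUSES:
            return prediction
        if waited >= timeout_s:
            raise TimeoutError(f"still {prediction.get('status')!r} after {waited:.1f}s")
        sleep(delay)
        waited += delay
        delay = min(delay * 2, max_delay_s)


if __name__ == "__main__":
    # Simulated status sequence for a cold start, so the sketch runs offline.
    statuses = iter(["starting", "starting", "processing", "succeeded"])
    print(wait_for_prediction(lambda: {"status": next(statuses)}, sleep=lambda s: None))
```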

Pricing Complexity: While pay-as-you-go is fair, it can be hard to predict monthly costs when some models are billed by the second, some by the token, and some by the image. You must carefully monitor your dashboard to avoid surprise bills.

Dependency on Platform Stability: Your application's uptime is directly tied to Replicate's API. While they are generally reliable, any outage on their end means your AI features will stop working immediately.
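One common way to soften this dependency is to retry transient failures with backoff and then degrade gracefully. The sketch below is a generic pattern, not a Replicate feature. `primary` and `fallback` are hypothetical callables, and the fallback might return a cached result or call another provider. Treating `ConnectionError` and `TimeoutError` as retryable is an assumption you would adapt to your HTTP client's exception types.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def call_with_retries(
    primary: Callable[[], T],
    fallback: Optional[Callable[[], T]] = None,
    attempts: int = 3,
    base_delay_s: float = 1.0,
    retry_on: tuple = (ConnectionError, TimeoutError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `primary`, retrying transient errors with jittered exponential backoff.

    If every attempt fails and a `fallback` is given, return its result;
    otherwise re-raise the last error.
    """
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    last_error: Optional[BaseException] = None
    for attempt in range(attempts):
        try:
            return primary()
        except retry_on as err:
            last_error = err
            if attempt < attempts - 1:
                # Delays of roughly 1x, 2x, 4x base_delay_s, randomized by +/-50%.
                sleep(base_delay_s * (2 ** attempt) * (0.5 + random.random()))
    if fallback is not None:
        return fallback()
    raise last_error
```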

Black-box Infrastructure: You don't have control over the specific hardware environment or the underlying optimization of the model inference, which might be a limitation for power users who want to tweak performance at the driver level.

Pricing Overview

Replicate operates on a pure usage-based model. You only pay for what you use, with no monthly subscription fees for the standard service. In 2026, the pricing is split into two main categories: hardware-based (time) and output-based (tokens/images).

Example Model Pricing (as of 2026):

- Claude 3.7 Sonnet: $3.00 per million input tokens and $15.00 per million output tokens ($0.015 per thousand).

- DeepSeek R1: $3.75 per million input tokens and $10.00 per million output tokens ($0.01 per thousand).

- FLUX 1.1 Pro: $0.04 per generated image.

- FLUX Dev: $0.025 per generated image.

- FLUX Schnell: $0.003 per generated image ($3.00 per thousand).

- Ideogram v3 Quality: $0.09 per generated image.

- Recraft V3: $0.04 per generated image.

- Wan 2.1 Video (480p): $0.09 per second of output video.
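Because these models are billed in different units, a small estimator helps turn expected usage into a monthly figure. This sketch hard-codes the example prices listed above, normalized to a per-unit rate. The dictionary keys are hypothetical labels rather than Replicate model slugs. The prices will drift, so re-check them before budgeting.

```python
# Per-unit USD rates derived from the example price list (2026 snapshot).
# Keys are illustrative labels, not Replicate model identifiers.
PRICES = {
    "claude-3.7-sonnet": {"input_token": 3.00 / 1_000_000, "output_token": 0.015 / 1_000},
    "deepseek-r1": {"input_token": 3.75 / 1_000_000, "output_token": 0.01 / 1_000},
    "flux-1.1-pro": {"image": 0.04},
    "flux-dev": {"image": 0.025},
    "flux-schnell": {"image": 3.00 / 1_000},
    "ideogram-v3-quality": {"image": 0.09},
    "recraft-v3": {"image": 0.04},
    "wan-2.1-480p": {"video_second": 0.09},
}


def estimate_cost(usage: dict[str, dict[str, float]]) -> dict[str, float]:
    """Return the USD cost per model plus a "total" key, rounded to cents."""
    breakdown = {}
    for model, quantities in usage.items():
        rates = PRICES[model]  # a KeyError flags a model or unit you haven't priced
        breakdown[model] = sum(rates[unit] * qty for unit, qty in quantities.items())
    breakdown["total"] = sum(breakdown.values())
    return {name: round(cost, 2) for name, cost in breakdown.items()}


if __name__ == "__main__":
    monthly_usage = {
        "claude-3.7-sonnet": {"input_token": 2_000_000, "output_token": 500_000},
        "flux-schnell": {"image": 1_000},
        "flux-1.1-pro": {"image": 200},
        "wan-2.1-480p": {"video_second": 60},
    }
    print(estimate_cost(monthly_usage))
```

For the usage in the example, the arithmetic works out to $13.50 (Claude: $6.00 input plus $7.50 output) + $3.00 + $8.00 + $5.40 = $29.90.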

For models billed by hardware, the price-per-second varies depending on whether you are using a CPU, an Nvidia T4, or an A100/H100 GPU. You are only billed for the time the model is actively processing your request.
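For time-billed models, cost is the active run time multiplied by the hardware's per-second rate. The following minimal sketch assumes each finished prediction's JSON includes `metrics.predict_time` in seconds. The rate is a parameter because it depends on the hardware, and the value in the example is a placeholder, not a quoted Replicate price.

```python
def time_billed_cost(prediction: dict, rate_per_s: float) -> float:
    """USD cost of one prediction: model run time in seconds x per-second rate.

    Assumes the prediction JSON includes metrics.predict_time; predictions
    without it (for example, still running) count as zero here.
    """
    predict_time = (prediction.get("metrics") or {}).get("predict_time") or 0.0
    return predict_time * rate_per_s


def total_time_billed_cost(predictions: list[dict], rate_per_s: float) -> float:
    """Sum the time-billed cost over a batch of predictions on the same hardware."""
    return sum(time_billed_cost(p, rate_per_s) for p in predictions)


if __name__ == "__main__":
    PLACEHOLDER_RATE_PER_S = 0.001  # hypothetical; look up the real rate for your hardware
    predictions = [
        {"id": "a", "metrics": {"predict_time": 2.5}},
        {"id": "b", "metrics": {"predict_time": 7.5}},
        {"id": "c", "status": "processing"},  # no metrics yet
    ]
    print(f"${total_time_billed_cost(predictions, PLACEHOLDER_RATE_PER_S):.4f}")
```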

Pricing last verified: October 2026.

Our Assessment

After extensively testing Replicate throughout 2026, it is clear that they remain the leader in the 'AI-as-a-Service' space for developers. The sheer speed at which they integrate new models—often having them live within hours of a GitHub release—is unmatched. For a developer, the value proposition is simple: your time is more expensive than their compute. By spending $0.04 on a FLUX image instead of spending three days setting up a local ComfyUI server with a public endpoint, you are choosing to focus on your product's unique value rather than the plumbing.

The ease of use is world-class. The 'Playground' feature allows you to test prompts and parameters in the browser before ever writing a line of code, and it even generates the code snippets for you. While we have noted limitations like cold starts, Replicate has made significant strides in 2026 to reduce these through better predictive scaling. For any team building an AI-first product, Replicate isn't just a tool; it's a competitive advantage. It allows a single engineer to wield the power of a full ML department. The pricing is transparent and scales with you, making it as viable for a weekend project as it is for a Series A startup. If you can write a basic API call, you can build a world-class AI application on Replicate.

Top Alternatives

Hugging Face Inference Endpoints — Choose Hugging Face when you need to deploy a specific, obscure model from their repository on dedicated hardware that you control.

Together AI — Choose Together AI when you are primarily focused on LLMs and need the absolute lowest latency and highest throughput for models like Llama or Mistral.

Groq — Choose Groq when your application requires near-instantaneous text generation, as their LPU technology significantly outperforms traditional GPUs for inference speed.

Frequently Asked Questions

Q: Can I use Replicate for free?

Replicate offers a 'Try for free' option which usually includes a small amount of initial compute credit so you can test models in the playground. However, once those credits are exhausted, you must add a credit card and pay for continued usage.

Q: How do I fine-tune a model on Replicate?

You can fine-tune models like FLUX or Llama by providing a dataset (usually images or text) and using Replicate's training API or web interface. They manage the GPU orchestration, and once the training is complete, you receive a unique model ID that you can call just like any public model.
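Through the API, starting a fine-tune takes one more POST. The sketch below builds, but does not send, a request to what we understand to be the trainings endpoint, `POST /v1/models/{owner}/{name}/versions/{version_id}/trainings`. It includes a `destination` model that receives the resulting version, which is generally a model you have already created under your account. The trainer slug, version ID, destination, and the keys inside `input` are all hypothetical placeholders, because each trainer documents its own inputs.

```python
import json
import os
import urllib.request
from typing import Optional

API_BASE = "https://api.replicate.com/v1"


def build_training_request(
    trainer: str,
    version_id: str,
    destination: str,
    training_input: dict,
    token: str,
    webhook: Optional[str] = None,
) -> urllib.request.Request:
    """Build (but do not send) a request that starts a fine-tuning job.

    `trainer` is the "owner/name" of a training-capable model, `version_id`
    pins the trainer version, and `destination` ("your-user/your-model") is
    the model that will receive the trained version.
    """
    owner, name = trainer.split("/", 1)
    payload = {"destination": destination, "input": training_input}
    if webhook:
        # Optional: get notified when training finishes instead of polling.
        payload["webhook"] = webhook
    return urllib.request.Request(
        url=f"{API_BASE}/models/{owner}/{name}/versions/{version_id}/trainings",
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
    )


if __name__ == "__main__":
    req = build_training_request(
        trainer="example-owner/example-trainer",  # hypothetical trainer
        version_id="VERSION_ID",  # placeholder
        destination="your-username/your-fine-tune",  # hypothetical destination
        training_input={"input_images": "https://example.com/photos.zip"},  # hypothetical key
        token=os.environ.get("REPLICATE_API_TOKEN", "r8_placeholder"),
    )
    print(req.get_method(), req.full_url)
```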

Q: Is my data private when using Replicate?

Replicate provides options for private models. While public models are contributed by the community, you can deploy your own models or fine-tunes that are only accessible via your API key. Always check their latest enterprise terms for specific data privacy guarantees.

Q: What programming languages does Replicate support?

Replicate officially supports Node.js and Python through dedicated libraries. However, because it is a standard REST API, you can use it with any language that can make HTTP requests, including Go, Ruby, Rust, or even cURL.

Q: How does Replicate compare to OpenAI's API?

OpenAI's API primarily serves OpenAI's own proprietary models (GPT-4, etc.). Replicate provides access to thousands of open-source models (FLUX, Llama, SDXL) as well as some proprietary ones. Replicate is the better fit if you want flexibility and the ability to use the latest open-source research.

Last reviewed: October 2026. Features and pricing are subject to change — always verify on the official website.


Pricing Plans

- Claude 3.7 Sonnet: $0.015/1k output tokens

- FLUX 1.1 Pro: $0.04/image

- DeepSeek R1: $0.01/1k output tokens

- Wan 2.1 Video: $0.09/sec

- FLUX Dev: $0.025/image

- FLUX Schnell: $3.00/1k images

- Ideogram v3: $0.09/image

